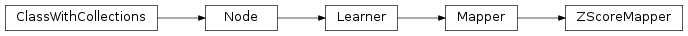

mvpa2.algorithms.hyperalignment.ZScoreMapper

class mvpa2.algorithms.hyperalignment.ZScoreMapper(params=None, param_est=None, chunks_attr='chunks', dtype='float64', **kwargs)

Mapper to normalize features (Z-scoring).

Z-scoring can be done chunk-wise (with an independent mean and standard deviation per chunk) or on the full data. A sample attribute can be specified whose unique values determine the chunks.
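As a rough, library-free illustration of the difference between the two modes, the sketch below Z-scores a single feature either within each chunk or across all samples. The helper names (`zscore_values`, `zscore_chunkwise`), the use of the population standard deviation, and the handling of a zero standard deviation are assumptions made for this example, not PyMVPA's implementation.

```python
from collections import defaultdict
from statistics import fmean, pstdev


def zscore_values(values, mean, std):
    """Z-score a sequence using the given mean and standard deviation."""
    # Assumption: with zero spread, values are only mean-centered.
    scale = std if std > 0 else 1.0
    return [(v - mean) / scale for v in values]


def zscore_chunkwise(values, chunks):
    """Z-score `values` separately within each unique label in `chunks`."""
    indices_by_chunk = defaultdict(list)
    for idx, label in enumerate(chunks):
        indices_by_chunk[label].append(idx)

    out = [0.0] * len(values)
    for idxs in indices_by_chunk.values():
        chunk_vals = [values[i] for i in idxs]
        scored = zscore_values(chunk_vals, fmean(chunk_vals), pstdev(chunk_vals))
        for i, z in zip(idxs, scored):
            out[i] = z
    return out


values = [1.0, 3.0, 10.0, 30.0]
chunks = [0, 0, 1, 1]

# Each chunk gets its own mean and standard deviation.
chunkwise = zscore_chunkwise(values, chunks)
print(chunkwise)  # [-1.0, 1.0, -1.0, 1.0]

# A single mean and standard deviation for all samples.
global_scores = zscore_values(values, fmean(values), pstdev(values))
print(global_scores)
```

Chunk-wise scoring removes the offset between the two chunks, whereas global scoring preserves it.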

By default, Z-scoring parameters (mean and standard deviation) are estimated from the data (either chunk-wise or globally). However, it is also possible to define fixed parameters (again a global setting or per-chunk definitions), or to select a specific subset of samples from which these parameters should be estimated.
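The two alternatives to plain estimation can be sketched in pure Python as follows. This is only an illustration of the idea under stated assumptions: the function names, the chunk labels, and the `"rest"`/`"task"` attribute values are hypothetical, and the code does not call or reproduce PyMVPA itself.

```python
from statistics import fmean, pstdev


def apply_fixed(values, chunks, params):
    """Z-score with fixed parameters.

    `params` is either one (mean, std) tuple used for all samples,
    or a dict mapping chunk ids to (mean, std) tuples.
    """
    out = []
    for value, label in zip(values, chunks):
        mean, std = params[label] if isinstance(params, dict) else params
        out.append((value - mean) / std)
    return out


def apply_subset_estimate(values, attr, attr_values):
    """Estimate (mean, std) only from samples whose attribute value is in
    `attr_values`, then Z-score all samples with those parameters."""
    selected = [v for v, a in zip(values, attr) if a in attr_values]
    # Assumption: population standard deviation is used.
    mean, std = fmean(selected), pstdev(selected)
    return [(v - mean) / std for v in values]


values = [4.0, 6.0, 15.0, 25.0]
chunks = ["run1", "run1", "run2", "run2"]

# One fixed (mean, std) pair for everything.
global_fixed = apply_fixed(values, chunks, (5.0, 2.0))
print(global_fixed)  # [-0.5, 0.5, 5.0, 10.0]

# Separate fixed parameters per chunk.
per_chunk = apply_fixed(
    values, chunks, {"run1": (5.0, 1.0), "run2": (20.0, 5.0)}
)
print(per_chunk)  # [-1.0, 1.0, -1.0, 1.0]

# Parameters estimated from the "rest" samples only, applied to all samples.
signal = [2.0, 4.0, 9.0, 11.0]
condition = ["rest", "rest", "task", "task"]
from_rest = apply_subset_estimate(signal, condition, ["rest"])
print(from_rest)  # [-1.0, 1.0, 6.0, 8.0]
```

Estimating from a baseline subset expresses every sample in units of the baseline's variability, which is why the "task" samples end up far from zero here.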

If necessary, data is upcast to a configurable datatype to prevent information loss.
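Why upcasting matters can be seen with a small pure-Python sketch. It assumes integer input and mimics an integer container by truncating the Z-scores with `int()`; this is an illustration of the information loss, not the mapper's actual casting code.

```python
from statistics import fmean, pstdev

raw = [1, 2, 3, 4, 5]  # integer-valued feature
mean, std = fmean(raw), pstdev(raw)

# Z-scores kept as floating point values retain their fractional part.
as_float = [(v - mean) / std for v in raw]

# Forcing the result back into integers discards most of the information.
as_int = [int(z) for z in as_float]
print(as_int)  # [-1, 0, 0, 0, 1]
```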

Notes

The mapper can be used for forward-mapping of datasets without prior training (it will train itself automatically upon first use). It is, however, not possible to map plain data arrays without prior training. Chunk-wise Z-scoring of plain data arrays is likewise not possible, because plain arrays carry no sample attributes from which chunks could be identified.

Reverse-mapping is currently not implemented.

Available conditional attributes:

- calling_time+: Time (in seconds) it took to call the node
- raw_results: Computed results before invoking postproc. Stored only if postproc is not None.
- trained_dataset: The dataset it has been trained on
- trained_nsamples+: Number of samples it has been trained on
- trained_targets+: Set of unique targets (or any other space) it has been trained on (if present in the dataset trained on)
- training_time+: Time (in seconds) it took to train the learner

(Conditional attributes enabled by default are suffixed with +.)

Attributes

- auto_train: Whether the Learner performs automatic training when called untrained.
- chunks_attr
- descr: Description of the object if any
- dtype
- force_train: Whether the Learner enforces training upon every call.
- is_trained: Whether the Learner is currently trained.
- param_est
- params
- pass_attr: Which attributes of the dataset or self.ca to pass into result dataset upon call
- postproc: Node to perform post-processing of results
- space: Processing space name of this node

Methods

- __call__(ds)
- forward(data): Map data from input to output space.
- forward1(data): Wrapper method to map single samples.
- generate(ds): Yield processing results.
- get_postproc(): Returns the post-processing node or None.
- get_space(): Query the processing space name of this node.
- reset()
- reverse(data): Reverse-map data from output back into input space.
- reverse1(data): Wrapper method to map single samples.
- set_postproc(node): Assigns a post-processing node
- set_space(name): Set the processing space name of this node.
- train(ds): The default implementation calls _pretrain(), _train(), and finally _posttrain().
- untrain(): Reverts changes in the state of this node caused by previous training

Parameters

params : None or tuple(mean, std) or dict

Fixed Z-Scoring parameters (mean, standard deviation). If provided, no parameters are estimated from the data. It is possible to specify individual parameters for each chunk by passing a dictionary with the chunk ids as keys and the parameter tuples as values. If None, parameters will be estimated from the training data.

param_est : None or tuple(attrname, attrvalues)

Limits the choice of samples used for automatic parameter estimation to a specific subset identified by a set of a given sample attribute values. The tuple should have the name of that sample attribute as the first element, and a sequence of attribute values as the second element. If None, all samples will be used for parameter estimation.

chunks_attr : str or None

If provided, specifies the name of a sample attribute in the training data whose unique values identify chunks of samples. Z-scoring is then performed separately within each chunk.

dtype : Numpy dtype, optional

Target dtype that is used for upcasting, in case integer data is to be Z-scored.

enable_ca : None or list of str

Names of the conditional attributes which should be enabled in addition to the default ones

disable_ca : None or list of str

Names of the conditional attributes which should be disabled

auto_train : bool

Flag whether the learner will automatically train itself on the input dataset when called untrained.

force_train : bool

Flag whether the learner will enforce training on the input dataset upon every call.

space : str, optional

Name of the ‘processing space’. The actual meaning of this argument heavily depends on the sub-class implementation. In general, this is a trigger that tells the node to compute and store information about the input data that is “interesting” in the context of the corresponding processing in the output dataset.

pass_attr : str, list of str|tuple, optional

Additional attributes to pass on to an output dataset. Attributes can be taken from all three attribute collections of an input dataset (sa, fa, a – see Dataset.get_attr()), or from the collection of conditional attributes (ca) of a node instance. Corresponding collection name prefixes should be used to identify attributes, e.g. ‘ca.null_prob’ for the conditional attribute ‘null_prob’, or ‘fa.stats’ for the feature attribute stats.

In addition to a plain attribute identifier it is possible to use a tuple to trigger more complex operations. The first tuple element is the attribute identifier, as described before. The second element is the name of the target attribute collection (sa, fa, or a). The third element is the axis number of a multidimensional array that shall be swapped with the current first axis. The fourth element is a new name that shall be used for an attribute in the output dataset. Example: (‘ca.null_prob’, ‘fa’, 1, ‘pvalues’) will take the conditional attribute ‘null_prob’ and store it as a feature attribute ‘pvalues’, while swapping the first and second axes. Simplified instructions can be given by leaving out consecutive tuple elements starting from the end.

postproc : Node instance, optional

Node to perform post-processing of results. This node is applied in __call__() as a final processing step on the dataset that is about to be returned. If None, nothing is done.

descr : str

Description of the instance


chunks_attr

dtype

param_est

params